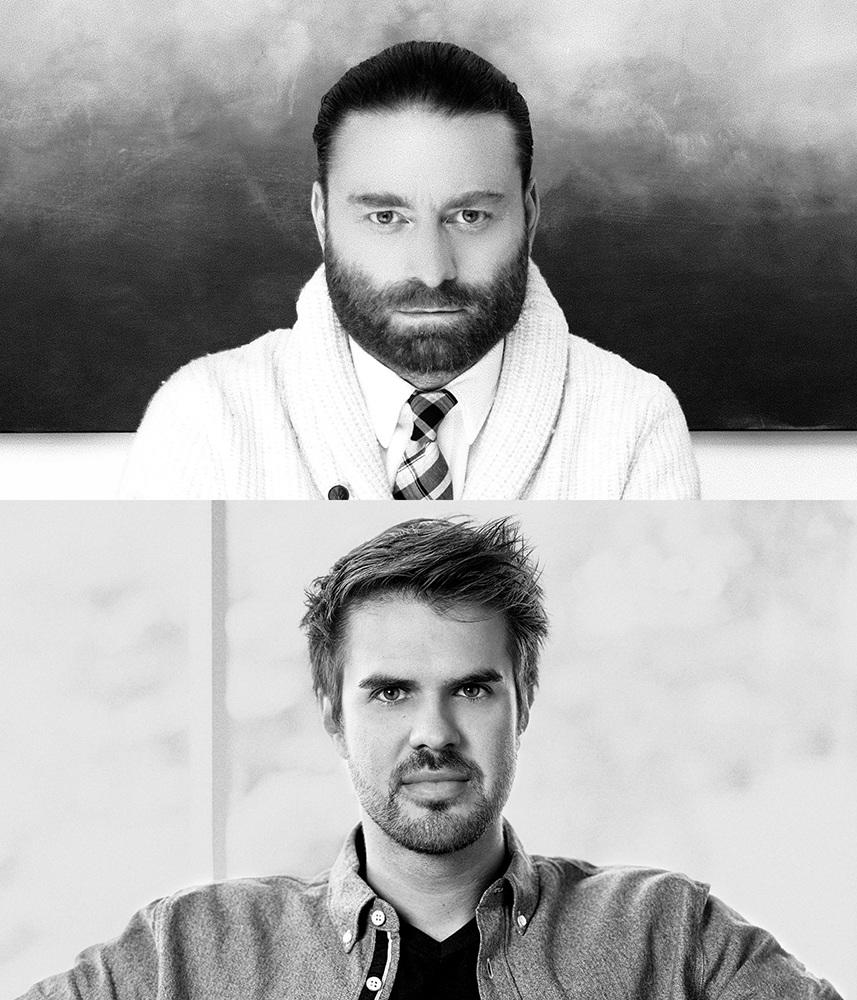

Life of Us creators Chris Milk and Aaron Koblin are LA-based VR innovators known for “The Wilderness Downtown,” an interactive Arcade Fire video that won a Grand Prix at the 2011 Cannes advertising awards.

Over the years, the two have put together a string of groundbreaking projects that blend emerging technology with consumer entertainment. Today, the pair collaborates with organizations like Apple, The New York Times, Vice Media, and the United Nations.

Their brainchild is WITHIN, a production and distribution company of more than 35 people whose mission is to define VR as a new medium for experiential storytelling and to use it to seed empathy. “Virtual reality is the ultimate empathy machine,” Milk says. “It allows you to connect on a real human level, soul to soul, regardless of where you are in the world.”

Life of Us is WITHIN’s first prototype for what a shared VR experience looks and feels like.